The last few months have been pretty exciting for those following AI research developments. I thought I’d capture some of the thoughts that are going around during this whirlwind.

The End of the Scaling Era

At the end of November, top researchers announced that LLM progress had "hit a wall". In short, for the first time, scaling neural nets larger and larger was yielding diminishing returns. This was celebrated by naysayers, who claimed they'd been predicting this for years.

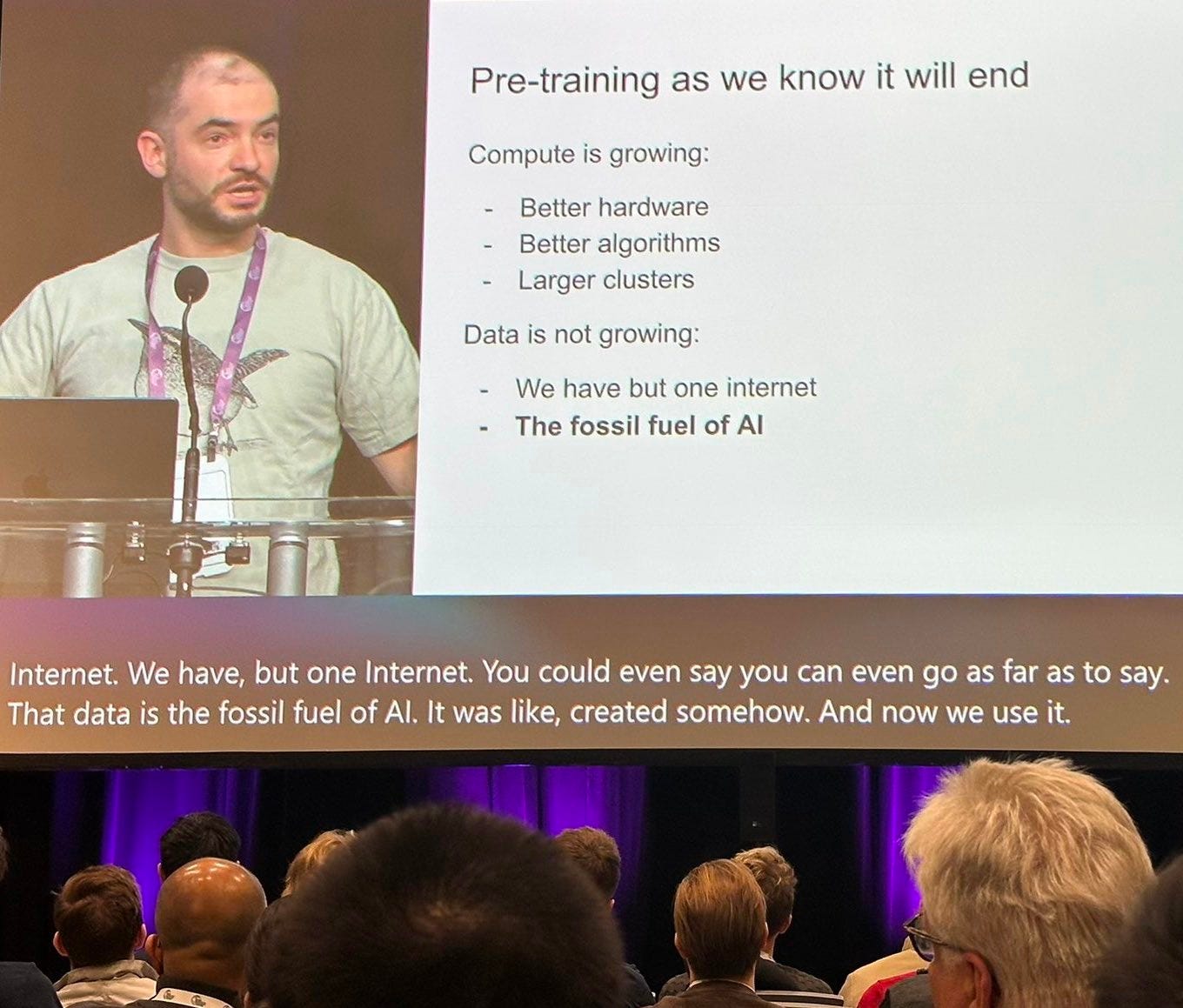

At NeurIPS, the largest AI conference, Ilya Sutskever - who served as OpenAI's chief scientist, helped launch the deep learning revolution with Hinton in 2012, championed the "scale is all you need" philosophy, and won the last three NeurIPS test-of-time paper awards - announced that the "pre-training era" was now over and a new paradigm was needed.

Within two weeks, OpenAI released their o3 model, showing that this new direction of development was now unlocked. It posted impressive results on reasoning (including on very hard benchmarks), which has so far been the Achilles' heel of these large models.

Understanding LLM Reasoning

While Large Language Models excel at pattern matching, they have been notoriously bad at planning, or even at simple reasoning tasks (this includes simple maths, like counting things). Largely, that's because it's simply not the kind of thing they've been trained to do until now.

The basic training paradigm of LLMs is to learn to predict the next word, given the many words that have come before it. To become very good at this task, it's a good idea to start representing things about the world in a rich and structured way.
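To make "predict the next word" concrete, here is a deliberately tiny sketch. It is not how an LLM is built: it looks only at the single previous word and counts what tends to follow it, whereas a real model conditions on the many words before and learns rich representations. The corpus and the function names `train_bigram` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus (an assumption for this sketch, not real training data).
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram(corpus)

print(predict_next(model, "the"))  # "cat" follows "the" most often in the corpus
print(predict_next(model, "dog"))  # None: "dog" never appears in the corpus
```

Even this toy shows the basic limitation discussed below: the predictor can only echo patterns present in its data and has nothing to say about a word it has never seen.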

Eventually, when trained for humongous amounts of time and on humongous amounts of data, these models end up capturing the dataset they've been trained on, and can generate coherent and useful answers. Bear in mind that this is very different from how the human mind learns: we need very few examples to learn about new things, and we can actively direct our own learning by asking questions about the world and conducting small experiments like everyday scientists, incrementally building internal models of the world.

While LLMs have been trained to represent the knowledge in the data very richly, they have not been trained to "perform operations" on that knowledge.

Because of this, it makes sense to think of LLMs as excellent "approximate knowledge retrievers" on the data they have been trained on.

As soon as one asks about something that's outside the data, the model will tend to make things up (or confabulate).

Because these systems have been trained on huge amounts of relatively intelligent data that contains plans and reasoning, it can look as if they have these capabilities themselves. But as the complexity of a problem grows, so does the model's likelihood of sliding into total confabulation. While the output might still sound plausible, it quickly stops making sense.

One of the difficult things when discussing cognitive technology amidst massive hype cycles is that the technical words being used can trigger misconceptions for non-experts. “LLMs have learned to reason” can indeed be quite misleading. Basically, it means that the text generated by LLMs is supported by some logical scaffolding.

Let’s explain more specifically what is meant by reasoning here.

Example of a reasoning task

Imagine you're trying to solve this simple math problem: "If I have 7 apples and get 5 more, how many do I have?"

An LLM's training basically teaches it: "After 'If I have 7 apples and get 5 more, how many do I have?' humans often write 'I have 12 apples' or 'The answer is 12.'" It learns this pattern association, but it doesn't learn to perform the actual addition operation.

This becomes clearer with a slightly more complex problem: "If I have 7 apples, then give 3 to my friend, then buy twice as many as I have left, how many do I have?"

A human would:

1. Start with 7

2. Subtract 3 (= 4)

3. Double 4 to get the number bought (= 8)

4. Add the 8 bought to the 4 left (= 12)

But an LLM might struggle because it's looking for patterns in text rather than learning to execute a sequence of operations. It hasn't been explicitly trained to break down problems into steps and execute them sequentially.

Perhaps one way to think about it is that the models have been designed in a way that is not sensitive to whether something is true, but to whether something "sounds" true. So the focus has been more on the external validity of something, rather than its "internal" validity. Since it's still early days, it's impressive how useful this first iteration has been.

With the new family of models, researchers have figured out that you can teach a model how to operate on that knowledge if you train it to generate "reasoning steps", a kind of stream of consciousness about the task, and leverage that "chain of thought" to improve the accuracy of the model's response.

So instead of directly generating the predicted words (e.g. "I have 12 apples"), the model is initially trained on breaking down the problem and generating the reasoning steps. These steps are also called "chain of thought".

In our example, this would look like the chain of thought (CoT) we listed earlier:

1. Start with 7

2. Subtract 3 (= 4)

3. Double 4 to get the number bought (= 8)

4. Add the 8 bought to the 4 left (= 12)

This is what is meant by reasoning.
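As a rough illustration of the difference between jumping straight to an answer and writing the steps out, here is a small sketch that solves the apple problem while recording each intermediate result. It makes the last step explicit: the apples bought are added to the apples left to get the final count. The function name `solve_with_steps` and its parameters are made up for this example, and this is ordinary code, not what happens inside a model.

```python
def solve_with_steps(start, given_away, multiplier):
    """Solve the apple problem while recording each intermediate step."""
    steps = []

    left = start - given_away
    steps.append(f"Start with {start}, give away {given_away}: {left} left")

    bought = multiplier * left
    steps.append(f"Buy {multiplier} x {left} = {bought} more")

    total = left + bought
    steps.append(f"Add them up: {left} + {bought} = {total}")

    return steps, total


steps, answer = solve_with_steps(start=7, given_away=3, multiplier=2)
for i, step in enumerate(steps, 1):
    print(f"{i}. {step}")
print("Answer:", answer)  # 12
```

The point of the written-out trace is that each line can be checked on its own, which is what makes the training signals described in the next section possible.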

First results from scaling reasoning

What has been impressive with this new direction, where compute can be scaled "at test-time" to improve the performance of models (i.e. you can produce longer chains of thought), is that the recipe is fairly simple. The way to achieve this has been to further train the model by giving it a reward signal on reasoning tasks, with the goal of producing better reasoning traces to improve the responses. The reward signal can either be based only on whether the final answer is correct, or it can be more granular, using a Process Reward Model to evaluate intermediate steps rather than only final outputs.
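The two kinds of reward signal can be sketched in a few lines. This is a toy under loud assumptions: a real Process Reward Model is a learned model that scores free-text reasoning, whereas here each step is a hypothetical `(a, op, b, claimed_result)` tuple and the "checker" is plain arithmetic. The names `outcome_reward`, `process_reward`, and `check_step` are invented for illustration.

```python
import operator

# Arithmetic operations the toy step checker understands.
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def outcome_reward(final_answer, correct_answer):
    """Reward based only on whether the final answer is right."""
    return 1.0 if final_answer == correct_answer else 0.0


def check_step(step):
    """Stand-in for a learned process reward model: verify one arithmetic step."""
    a, op, b, claimed = step
    return OPS[op](a, b) == claimed


def process_reward(steps, step_checker):
    """Fraction of intermediate steps that the checker accepts."""
    if not steps:
        return 0.0
    return sum(1.0 for s in steps if step_checker(s)) / len(steps)


# Two reasoning traces for the apple problem (correct answer: 12).
good_trace = [(7, "-", 3, 4), (2, "*", 4, 8), (4, "+", 8, 12)]
bad_trace = [(7, "-", 3, 4), (2, "*", 4, 6), (4, "+", 6, 10)]  # slip in step 2

print(outcome_reward(12, 12), process_reward(good_trace, check_step))
print(outcome_reward(10, 12), process_reward(bad_trace, check_step))
```

For the flawed trace, the outcome reward only says "wrong", while the process reward still gives credit for the two steps that are valid on their own terms and pinpoints where the slip happened. That finer-grained feedback is the appeal of scoring intermediate steps.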

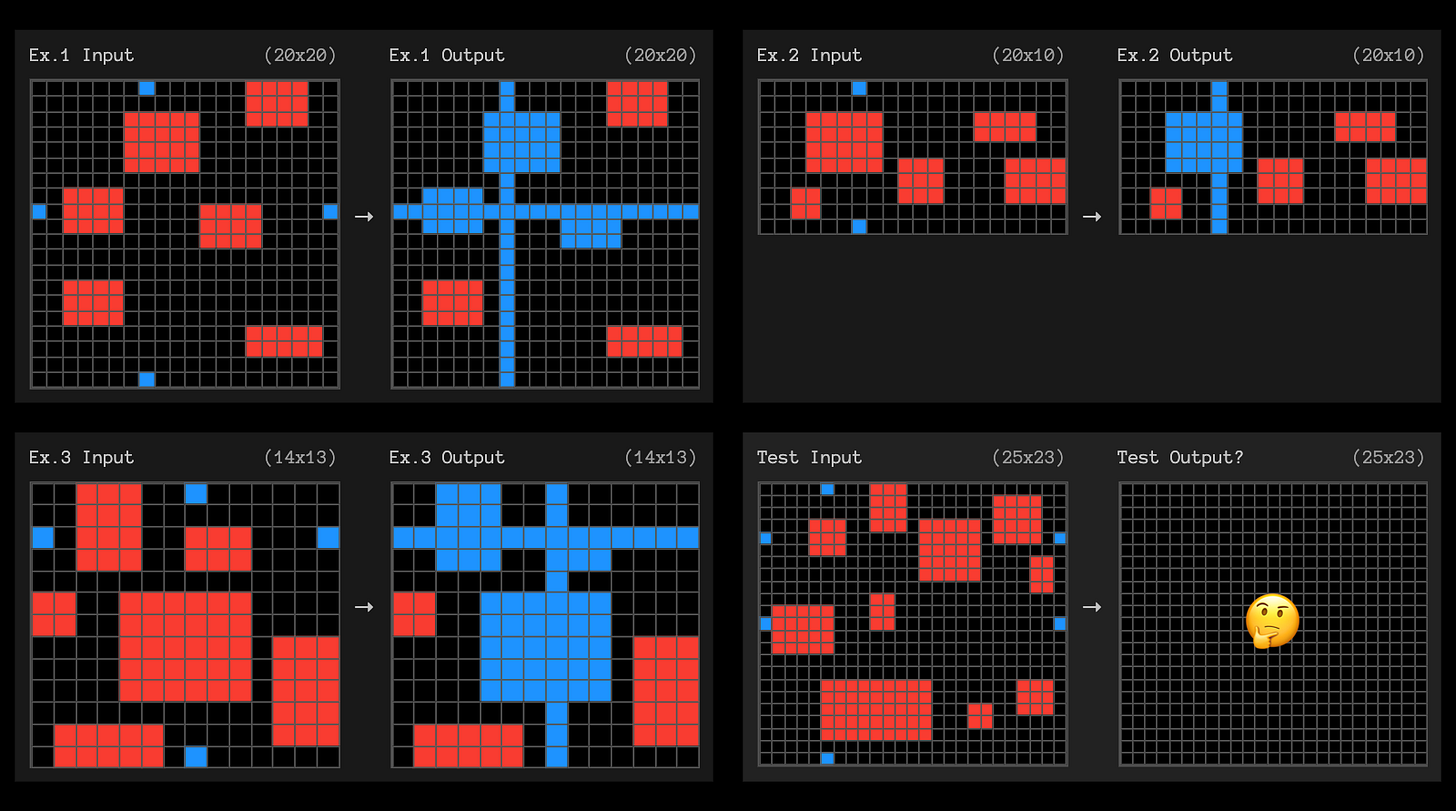

The o3 results on programming and mathematical reasoning tasks are impressive. The final results presented by OpenAI were on a "cognitive science"-inspired task, the ARC challenge, which focuses on abstract visual reasoning. It's an interesting benchmark because these puzzles are solved intuitively by humans but were out of reach for previous LLMs. With o3, for the first time, a general model is able to outperform average human performance. (cf. here)

The current benchmarks have been strongly focused on maths problems and software engineering (fixing programming bugs), both of which involve a lot of complex reasoning steps.

Despite this success, it's interesting to see o3 fail on some puzzles that people find very simple (see pic below). "o3 can wow the Fields Medalists, but still fail to solve some 5-yr-old puzzles" (tweet).

Ethan Mollick refers to this as the jagged frontier, describing how LLMs are very good at very difficult things, and very poor at seemingly simple ones. This is why Yann LeCun (Chief AI Scientist at Meta) can claim that LLMs are “dumber than a cat” when asked if we should be afraid of AI progress ("pardon my french, it's bullshit"), while they also solve PhD-level maths problems.

“We are used to the idea that people or entities that can express themselves, or manipulate language, are smart—but that’s not true,” says LeCun. “You can manipulate language and not be smart, and that’s basically what LLMs are demonstrating.” ~ Yann LeCun

The DeepSeek Breakthrough

To finish the year off, DeepSeek, a Chinese company, showed that they could match the performance of the best models with 10-100x less compute, releasing their V3 model (matching GPT-4o and Claude 3.5 Sonnet on the main benchmarks). Then, in January 2025 (last week), they released their R1 model, matching the performance of the best OpenAI models (o1 and o3, which brought chain-of-thought reasoning to the fore) and joining the family of "reasoning" models.

The R1 release happened as Trump announced the Stargate project, a $500 billion investment in AI infrastructure in the US, led by OpenAI. The R1 release led to an almost $600 billion crash in Nvidia’s market value.

Part of why the DeepSeek release was seen as such a significant event is that, since the start of OpenAI, the main narrative has been that performance basically comes down to scaling compute and data (see for example “the bitter lesson”, written in 2019 by Rich Sutton, a legendary reinforcement learning professor). This "scale is all you need" narrative has also helped fuel the massive investments in companies like OpenAI.

Historical side-note: "scale is all you need" was the scientific belief that led to the success of neural networks today, with the first breakthrough coming in 2012 with AlexNet. It’s held up ever since, culminating in the boom of ChatGPT and LLMs, so it's unsurprising that it's strongly held today!

Many people have been led to believe that huge amounts of money and compute would create a "moat". The thinking was that only the best-resourced companies could afford to build these models, allowing them to maintain market dominance through their superior offerings. DeepSeek has shaken this belief and perhaps put this VC/American hubris in check.

Beyond matching the performance of OpenAI's best models, they've also released the full training recipe and the neural network weights. This means that anyone has access to these models. The efficiency of their training process also means that the cost of running these models is orders of magnitude lower than it was. The "compute is all you need" and "compute is the moat" narrative is essentially being replaced by "intelligence will be cheap and for everyone".

Looking Ahead: Implications & Challenges

Overall, the trend seems fairly clear: 1) LLMs will become increasingly strong at reasoning, 2) they will become increasingly efficient, and 3) things are moving very fast. In the first week of January, a small model was able to match the best large models at math reasoning tasks through this new paradigm (tweet).

Undoubtedly, 2025 will be another record year for AI progress. When things go so fast, it's perhaps wise to take a step back!

First, for the research community I think it's important to think about their contribution.

At NeurIPS, the writer Ted Chiang closed his talk at the creativity and generative AI workshop with the following quote:

"How is what you're doing different from geologists working in the oil industry? Are you using your knowledge of geology to make the world a better place?"

2024 was the warmest year ever recorded, and the start of this year is only making the crisis we're in more glaringly obvious. Building loads of data centres and calling for more compute might not be the only move available in our quest for more intelligence! (see this thread by Karen Hao)

For people further from the field, I think it's interesting to reflect on the role of knowledge in our lives. For centuries, having access to knowledge was difficult, and often a mark of privilege, or at least of considerable effort. Now knowledge is at our fingertips more than ever. I'd argue intelligence is moving away from having knowledge and increasingly towards choosing the right problems to solve. Knowing how and when to use that knowledge, or how to successfully implement intelligent ideas in the real world, will remain a difficult task. Because of this, it will take time for AI innovation to cascade into the physical world: it will always be combined with the messy human stuff (mindsets and resistance to change, overcoming existing systems and processes, etc.).