Summary of the last episode: At the end of 2024, AI development reached a significant turning point. The traditional approach of scaling language models ever larger had begun showing diminishing returns. Since then, researchers have focused on developing more sophisticated ways for AI models to reason systematically through problems, rather than simply scaling up model size or relying on brute-force approaches. The key insight has been that models can achieve better results by explicitly showing their work through step-by-step problem solving, combined with more efficient ways to explore possible solutions.

I wrote a bit about reasoning in LLMs in the last post (go there for an intro), but since it's probably going to be the theme of AI research for the next couple of months, I thought I'd write about it a little more.

In this post, I want to explore some of the questions that this moment raises: What do we mean by reasoning in AI systems? Can studying how humans think and solve problems still teach us something valuable in this new era of AI development?

I also try to bring in some historical context, and explore how the tension between understanding how people think and designing systems to help us think has played out many times throughout history, often leading to unexpected breakthroughs.

Can LLMs reason or plan? feat. Subbarao Kambhampati

One of the brightest voices on reasoning and Large Language Models has been Subbarao Kambhampati - he's published a number of great papers on the capabilities of LLMs on planning problems, making it clear that no, they cannot reason or plan. This strong “no” predates the recent wave of “inference-time scaling” approaches, which have focused on developing exactly these capabilities.

More broadly, Kambhampati has been a sharp critic of AI hype, and a voice of reason since the start of this frenzy.

Planning is a great place to study reasoning because it's a formal framework: plans can be compared and measured, and you can say clearly whether a plan is valid or not. In many other areas of reasoning, things are a bit hazier.

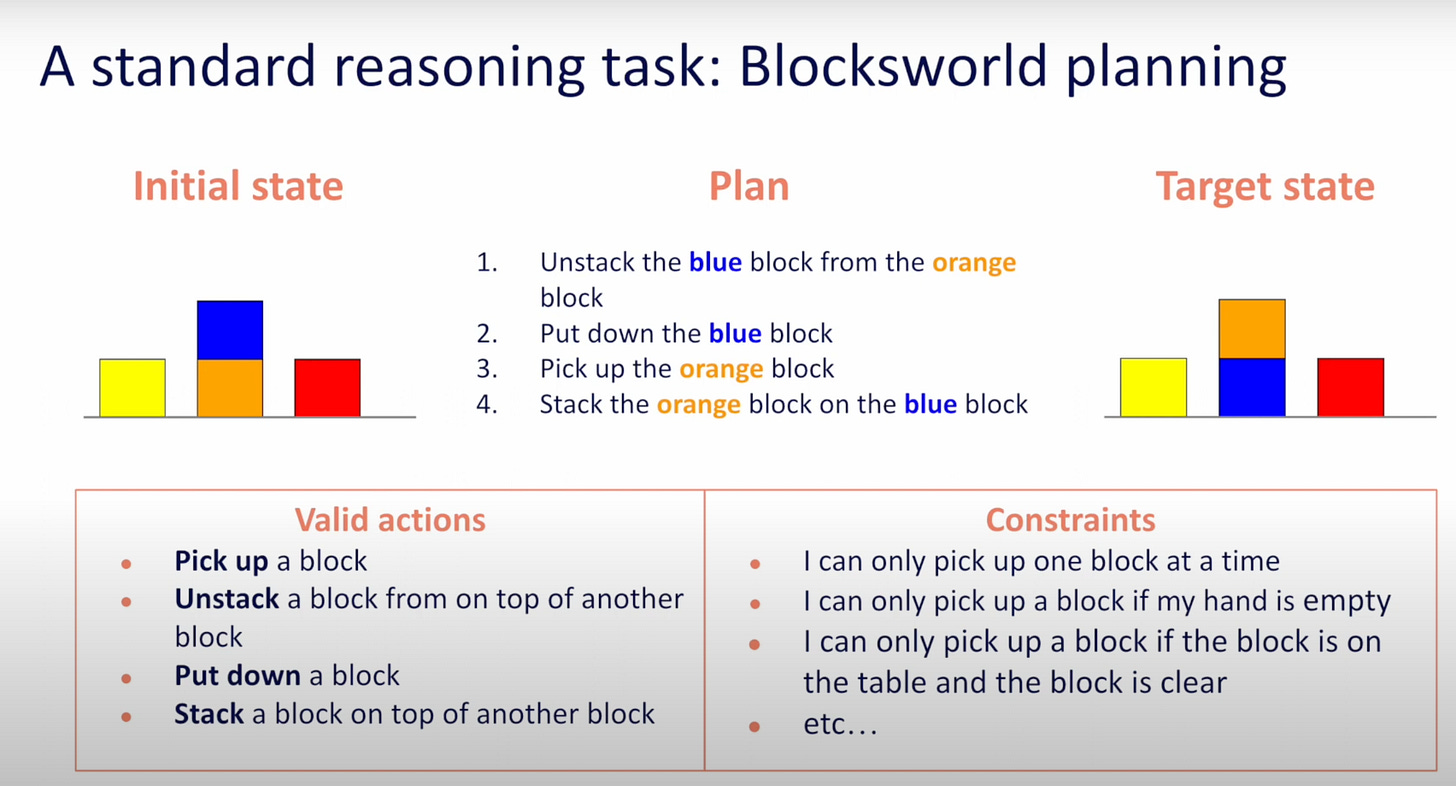

One classic example is the "Blocks World" planning task. It is a long-standing AI planning domain, used here to test LLMs' ability to do multi-step planning and to understand spatial relationships. The task involves manipulating blocks labeled with letters or numbers to reach a target configuration. A typical prompt might ask the model for the sequence of moves needed to transform an initial state (like blocks stacked as "A B C") into a goal state (like "C B A"). Until recently, tasks like these have been central to showing that LLMs can’t innately plan [1, 2].
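To make the idea that a plan can be checked concrete, here is a minimal sketch (my own illustration, not code from Kambhampati's papers) that treats Blocks World as a search from an initial state to a goal state. It assumes a simplified domain: any number of stacks on an unbounded table, and a single action that moves a clear block onto another clear block or onto the table. The function names `plan` and `is_valid_plan` are hypothetical. Breadth-first search finds a shortest plan, and the separate validator replays any proposed plan (for example, one written by an LLM) step by step.

```python
from collections import deque


def normalise(stacks):
    """Canonical form of a state: drop empty stacks and sort the rest.
    Each stack is a tuple of block names listed bottom to top."""
    return tuple(sorted(tuple(s) for s in stacks if s))


def successors(state):
    """Yield (move, next_state) pairs, where a move (block, dest) takes a
    clear block and puts it on another clear block or on the table."""
    for i, src in enumerate(state):
        block = src[-1]
        rest = [list(s) for k, s in enumerate(state) if k != i]
        remaining = list(src[:-1])
        # Move onto the top of another stack.
        for j, dst in enumerate(rest):
            new = [list(s) for s in rest]
            new[j].append(block)
            yield (block, dst[-1]), normalise(new + [remaining])
        # Move onto the table (skipped if the block is already alone there).
        if remaining:
            yield (block, "table"), normalise(rest + [remaining, [block]])


def plan(initial, goal):
    """Breadth-first search from initial to goal; returns a shortest move list."""
    start, target = normalise(initial), normalise(goal)
    frontier = deque([start])
    parent = {start: None}  # state -> (previous_state, move)
    while frontier:
        state = frontier.popleft()
        if state == target:
            moves = []
            while parent[state] is not None:
                state, move = parent[state]
                moves.append(move)
            return moves[::-1]
        for move, nxt in successors(state):
            if nxt not in parent:
                parent[nxt] = (state, move)
                frontier.append(nxt)
    return None  # goal unreachable


def is_valid_plan(initial, goal, moves):
    """Replay a plan, rejecting illegal moves, and check it ends at the goal."""
    stacks = [list(s) for s in initial if s]
    for block, dest in moves:
        src = next((s for s in stacks if s[-1] == block), None)
        if src is None:
            return False  # block is not clear, or does not exist
        if dest == "table":
            src.pop()
            stacks.append([block])
        else:
            dst = next((s for s in stacks if s[-1] == dest), None)
            if dst is None or dst is src:
                return False  # destination is not a clear block
            src.pop()
            dst.append(block)
        stacks = [s for s in stacks if s]
    return normalise(stacks) == normalise(goal)


initial = [("A", "B", "C")]  # one tower: A on the table, C on top
goal = [("C", "B", "A")]     # the same tower, reversed
moves = plan(initial, goal)
print(moves)                                # [('C', 'table'), ('B', 'C'), ('A', 'B')]
print(is_valid_plan(initial, goal, moves))  # True
```

The point of keeping the validator separate from the planner is that checking a plan is cheap and exact even when producing one is hard, which is what makes planning such a clean testbed for claims about reasoning.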

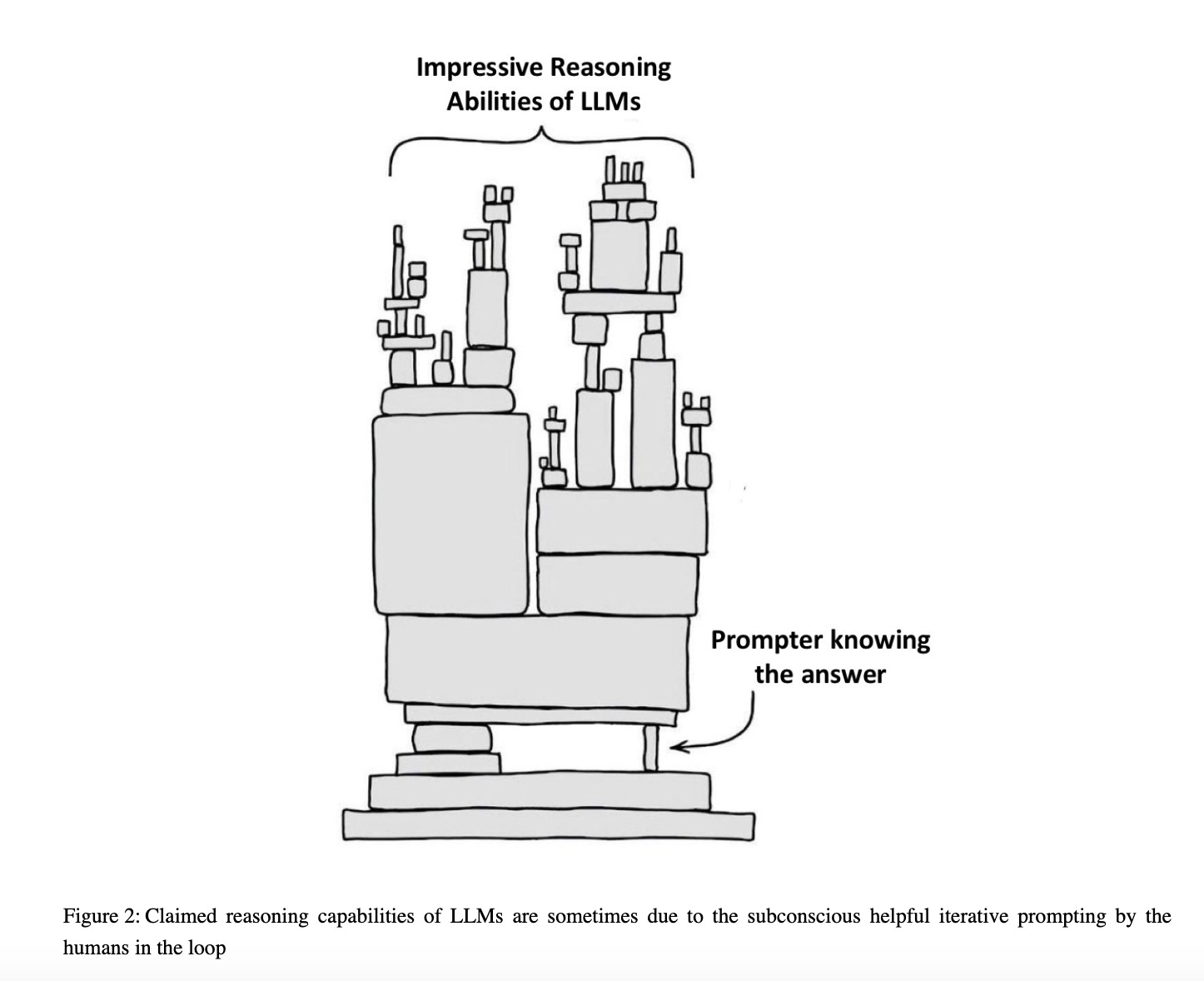

Here's one of Kambhampati’s takes on the "so-called reasoning abilities of LLMs" back in late 2023 (the pre-reasoning era).

Since late 2024, new "inference time scaling" approaches have emerged, showing promising improvements in LLMs' reasoning capabilities.

It is quite fun to think that there has probably never been a time in history when so many people have been thinking about reasoning. Most of these AI researchers are not cognitive scientists by training (they typically come from computer science, machine learning, physics, mathematics or statistics), so I like the idea that their varied intellectual paths are akin to a long reasoning process that has led them to think about reasoning.

An interdisciplinary convergence on reasoning, if you will (hopefully that makes sense).

Anyway!

This rapid evolution has transformed the landscape that Kambhampati originally critiqued. In response to these developments, Kambhampati asks a fundamental question: "What is reasoning?"

It is indeed a good place to start if we want to understand whether LLMs do reason.

Kambhampati is clear that we do not know what human reasoning is (“cognitive scientists don’t know, psychologists don’t know”).

He argues that throughout history, progress has come not from studying how humans reason, but from establishing sound logical principles and patterns. Citing influential milestones and frameworks such as Aristotle's syllogistic logic, he says we have moved forward not by asking "What do humans do?" but by asking "What are sound principles? What are sound reasoning patterns?". As a planning researcher, Kambhampati emphasizes the need for guarantees in deployed AI systems. He points out that while humans can make mistakes, there are consequences: "if you are being paid to make decisions, you make mistakes, there are penalties for you." Until we can establish similar accountability for AI systems, he argues, we should focus on formal definitions of reasoning with clear correctness criteria, rather than trying to replicate the uncertain patterns of human cognition.

However, this emphasis on formal guarantees, while crucial for certain applications, might not be the complete picture when it comes to today's language models. LLMs are fundamentally different from traditional planning systems - they operate in open-ended, natural language environments where formal guarantees aren't always possible or even desirable. When doing exploratory research, exploring new idea spaces, or solving novel problems, open-minded, creative reasoning processes might be much more useful.

I see two important counterpoints to Kambhampati's position:

- First, I feel that it's pretty hard, as a human, to think about human reasoning patterns and take the human out of the picture.
- Second, we've learned a lot from studying people, both when they get things right and where they go systematically wrong. Often, the heuristics and biases we intuitively use to learn about the world and act within it reveal extremely efficient learning strategies.

Overall, I feel that his position overlooks the crucial role that studying human reasoning has played in developing our most important formal frameworks. With all the attention that AI is currently getting, it's easy to forget that many transformative scientific frameworks have emerged from studying how humans think and learn. In my opinion, the current technological wave is another example of this pattern, where thinking about human reasoning unlocks new ways to read the world.

A bit of history on the study of thought

To see why studying human reasoning matters, let's look at how some of our most important formal frameworks actually emerged. Time and time again, curiosity about how humans think has led to the very kind of formal systems that Kambhampati champions.

Here’s a non-exhaustive walkthrough:

Probability Theory

Blaise Pascal transformed our understanding of evidence and uncertainty through his correspondence with Fermat about gambling problems. Their work on the "problem of points" - how to fairly divide the stakes in an interrupted game - led to the first systematic mathematical treatment of probability and expected value. The problem was proposed to Pascal and Fermat, probably in 1654, by the Chevalier de Méré, a gambler who is said to have had unusual ability “even for the mathematics.” Pascal didn't start by asking "What are sound principles?" He started from the practical questions of gamblers making decisions under uncertainty. From these observations of human reasoning about chance, he developed the formal mathematics of probability.
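The problem of points is concrete enough to compute. Here is a minimal sketch, assuming each remaining round is a fair 50/50 game and that player A still needs `a` wins while player B needs `b`; the function names and the stake of 64 are my own, for illustration. `share_recursive` follows the expected-value reasoning usually credited to Pascal (a fair share is the average of the two equally likely next positions), while `share_enumerated` follows the counting argument usually credited to Fermat (pretend all remaining rounds are played anyway and count the outcomes A wins). `Fraction` keeps the answers exact.

```python
from fractions import Fraction
from functools import lru_cache
from math import comb


@lru_cache(maxsize=None)
def share_recursive(a, b):
    """Pascal-style recursion: A's fair share of the stakes when A still
    needs `a` wins and B needs `b`, each round being a fair coin flip."""
    if a == 0:
        return Fraction(1)  # A has already won everything
    if b == 0:
        return Fraction(0)  # B has already won everything
    # Average the two equally likely outcomes of the next round.
    return (share_recursive(a - 1, b) + share_recursive(a, b - 1)) / 2


def share_enumerated(a, b):
    """Fermat-style counting: at most a + b - 1 more rounds decide the game,
    so count the outcomes of that many rounds in which A wins at least `a`."""
    n = a + b - 1
    favourable = sum(comb(n, k) for k in range(a, n + 1))
    return Fraction(favourable, 2 ** n)


# First to 3 wins; A leads 2-1, so A needs 1 more win and B needs 2.
stakes = 64
print(share_recursive(1, 2) * stakes)   # 48
print(share_enumerated(1, 2) * stakes)  # 48
```

The two methods always agree, which is part of what made the correspondence so convincing: two quite different ways of reasoning about the same gamble lead to the same number.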

This mathematical framework represented a profound shift in European thought. Before 1660, "probability" simply meant the approval of authorities - a "probable" doctor was one deemed trustworthy, not one whose treatments showed statistical efficacy. Pascal's work helped transform probability from a matter of authority into a mathematical tool for studying patterns in nature.

Jacob Bernoulli would go on to develop the first systematic theory for applying probability beyond games of chance. He was fascinated by how humans reason about uncertainty, both in games of chance and in legal proceedings. His masterwork "Ars Conjectandi" (published posthumously in 1713) explored how to quantify degrees of certainty in legal evidence and testimony, alongside mathematical probability. By studying how humans judge evidence and make decisions under uncertainty, he developed fundamental probabilistic results, including the law of large numbers, helping establish probability theory as a mathematical discipline. "Ars Conjectandi" is often described as the founding work of the calculus of probability. It had a double aim: to describe how people reason about uncertainty, and to instruct them in how they ought to reason about probabilities.

In a similar vein, Thomas Bayes was intrigued by how humans update their beliefs when faced with new evidence. His attempt to formalise this process of belief updating led to Bayesian inference - a framework that has proven remarkably powerful not just for statistics, but for understanding learning and inference more broadly. His essay on the subject was published posthumously in 1763.

What started as an attempt to understand human belief updating became a cornerstone of modern statistical reasoning. As Sharon Bertsch McGrayne writes in “The Theory That Would Not Die”, her book on the history of Bayes’ theorem:

> It solved practical questions that were unanswerable by any other means: the defenders of Captain Dreyfus used it to demonstrate his innocence; insurance actuaries used it to set rates; Alan Turing used it to decode the German Enigma cipher and arguably save the Allies from losing the Second World War; the U.S. Navy used it to search for a missing H-bomb and to locate Soviet subs; RAND Corporation used it to assess the likelihood of a nuclear accident; and Harvard and Chicago researchers used it to verify the authorship of the Federalist Papers.
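The core of Bayes' rule, applied to a handful of competing hypotheses, fits in a few lines. The sketch below is a minimal illustration: multiply each prior belief by how well that hypothesis predicts the new evidence, then renormalise so the beliefs sum to one. The coin example (a fair coin versus one assumed to land heads 3/4 of the time, with equal prior belief in each) and the function names are hypothetical. Each posterior is fed back in as the next prior, which is the belief-updating loop Bayes set out to formalise.

```python
from fractions import Fraction


def bayes_update(prior, likelihood, observation):
    """One step of Bayes' rule over a finite set of hypotheses.

    prior:      dict mapping hypothesis -> P(hypothesis)
    likelihood: function (observation, hypothesis) -> P(observation | hypothesis)
    Returns a dict mapping hypothesis -> P(hypothesis | observation).
    """
    unnormalised = {h: p * likelihood(observation, h) for h, p in prior.items()}
    evidence = sum(unnormalised.values())  # P(observation), the normalising constant
    return {h: w / evidence for h, w in unnormalised.items()}


# Hypothetical example: is a coin fair, or biased towards heads?
P_HEADS = {"fair": Fraction(1, 2), "biased": Fraction(3, 4)}


def coin_likelihood(flip, hypothesis):
    """Probability of seeing this flip ("H" or "T") under a hypothesis."""
    p = P_HEADS[hypothesis]
    return p if flip == "H" else 1 - p


belief = {"fair": Fraction(1, 2), "biased": Fraction(1, 2)}
for flip in "HHT":
    belief = bayes_update(belief, coin_likelihood, flip)
    print(flip, belief["biased"])
# H 3/5
# H 9/13
# T 9/17
```

Two heads push belief towards the biased coin, and a single tail pulls it back part of the way: the evidence never settles the question outright, it just shifts degrees of belief.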

Computation and Logic

George Boole explicitly set out to capture the "laws of thought" in mathematical form. His work "The Laws of Thought", published in 1854, was an ambitious attempt to model human logical reasoning algebraically. His effort to formalise human reasoning processes became Boolean algebra - the foundation of digital logic and information processing.

Alan Turing approached the question of computation by carefully observing how humans calculate. His foundational work on computability, published in 1936, came from modelling how a human "computer" (which was a job title back then) would work through mathematical problems with paper and pencil, making systematic marks and following rules. This human-inspired model became the theoretical foundation for modern computing.

Learning, Language and Representations

Noam Chomsky's observations about how children acquire language - seemingly effortlessly learning complex grammatical rules without explicit instruction - led him to develop formal grammars and helped kickstart the broader cognitive revolution of the 1950s. His insight that language could be described through mathematical principles revolutionised both linguistics and computer science. The Chomsky hierarchy of formal grammars became foundational for compiler design and programming languages, while his ideas about rule-based pattern generation influenced early natural language processing. His work showed how studying a fundamental human capacity could lead to powerful formal theories with practical applications.

Geoffrey Hinton was fascinated by how knowledge might be represented in distributed patterns in the mind. His journey started in his mid-teens in the 1960s, when a school friend introduced him to the concept of holograms – where information is spread across the entire medium rather than localised in specific points. This idea that memory and meaning might be distributed throughout the brain "like a hologram" sparked his lifelong work on neural networks and distributed representations. Driven by this deep curiosity about how the brain works, he found himself dissatisfied with traditional explanations, leading him to switch between various subjects at Cambridge, before eventually finding his path in artificial intelligence. His persistence through decades of skepticism about neural networks eventually led to breakthroughs that transformed machine learning and our understanding of how information might be organised in both artificial and biological systems. These insights became foundational to the deep learning revolution of the 2010s and the current development of Large Language Models.

In each case, studying human cognition led to formal frameworks, which enabled new technologies, which in turn gave us new ways to understand the world. This pattern has repeated throughout history, each iteration giving us new tools and perspectives. So when we look at current debates about LLMs and reasoning, it's worth remembering this productive cycle between studying human thought patterns and developing formal frameworks.

All (reasoning) models are wrong, but some are useful.

Let's circle back to where we are today: the current frontier, where Large Language Models are quickly learning how to reason.

As is often the case when words move from a technical context to a lay audience, the word "reasoning" may be a bit misleading because of the connotations it carries in everyday speech, especially combined with the current AI hype. A common misunderstanding is that these models are now able to “think for themselves”, i.e. that they are capable of independent thought.

So what is reasoning? In the case of LLMs, thinking (or perhaps more accurately information processing) means that there is a pattern of activation of (artificial) neurons that leads to the generation of a sequence of words. Reasoning might imply that this sequence of words is principled, correct or even true. When thinking about thinking and decision-making in the real world, however, it might be more useful to have a notion of truth that is approximate, ambiguous and perhaps imperfect.

A simpler framework to explain reasoning could be that it is a process that leads from a state A (where we are, what we know, what we have) to a state B (what we want, or where we want to be), in idea space. You could describe it as goal-directed text processing.

Designing strong benchmarks to measure the progress of models on reasoning tasks is perhaps what has had the biggest impact on their abilities so far. By using these benchmarks, we're training the models to move from A (the problem statement) to B (the problem solution). Perhaps unsurprisingly, it's mainly in mathematics and programming that we've been measuring reasoning progress. Of course, it's easier to move in a principled way in a principled world. But solving reasoning for one specific type of task doesn’t mean Reasoning has been solved. These ways of moving through idea space, these "sound reasoning patterns", might be quite different from one domain to the next, from one expert to the next, or from one culture to the next.

To me, a less misleading way to frame the recent progress is that neural networks are learning systems: they can learn to be better at solving problems if we also train them to solve problems. LLMs initially were not trained to solve problems; they were only trained to model large datasets of human text.

While we can train models on sets of A and B states, the real world is full of problems where we don't know the solutions. So where might studying human reasoning lead us today? Perhaps to new ways of handling uncertainty in open-ended problems, or better approaches to creative problem-solving. While formal guarantees remain crucial for deployed systems, the way humans explore new ideas, make creative leaps, or navigate ambiguous situations could inspire the next breakthrough in AI reasoning.

I think one key insight here is that, despite the current excitement about machines suddenly being able to think, we've been studying and formalising aspects of human thought for centuries. The challenge now isn't choosing between formal guarantees and human-inspired approaches, but finding ways to combine both, just as Pascal, Turing, and others did before us.

It will be fascinating to see how far this reasoning revolution will go!